Managing Anthropic Agent SDK Costs: A Post-June 15 Billing Playbook

Anthropic moves Agent SDK calls into a $100/mo credit pool on June 15. Here's the two-phase mitigation I shipped: a billing cap plus a provider router.

Your background agents are about to run out of money. Anthropic's new credit pool system means your automation could die in about a week. Here is how I re-engineered my stack to stay under budget without breaking my workflows.

The Setup

You've built a small fleet of agents. They sort your mail, watch your repos, file your daily briefings.

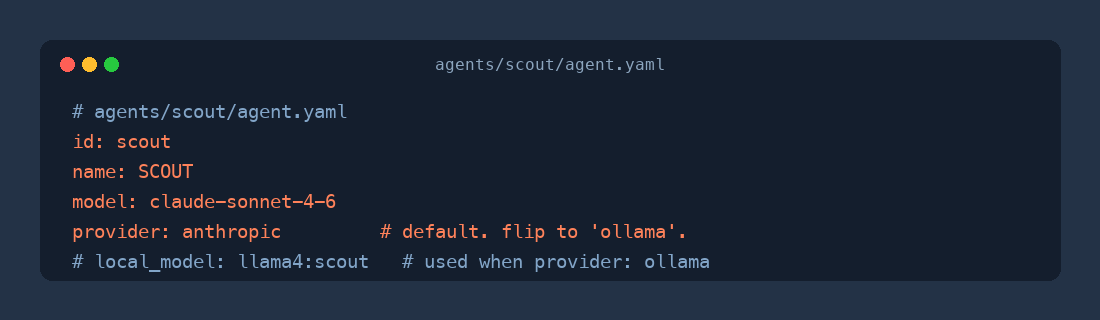

My setup before the June 15 cutover:

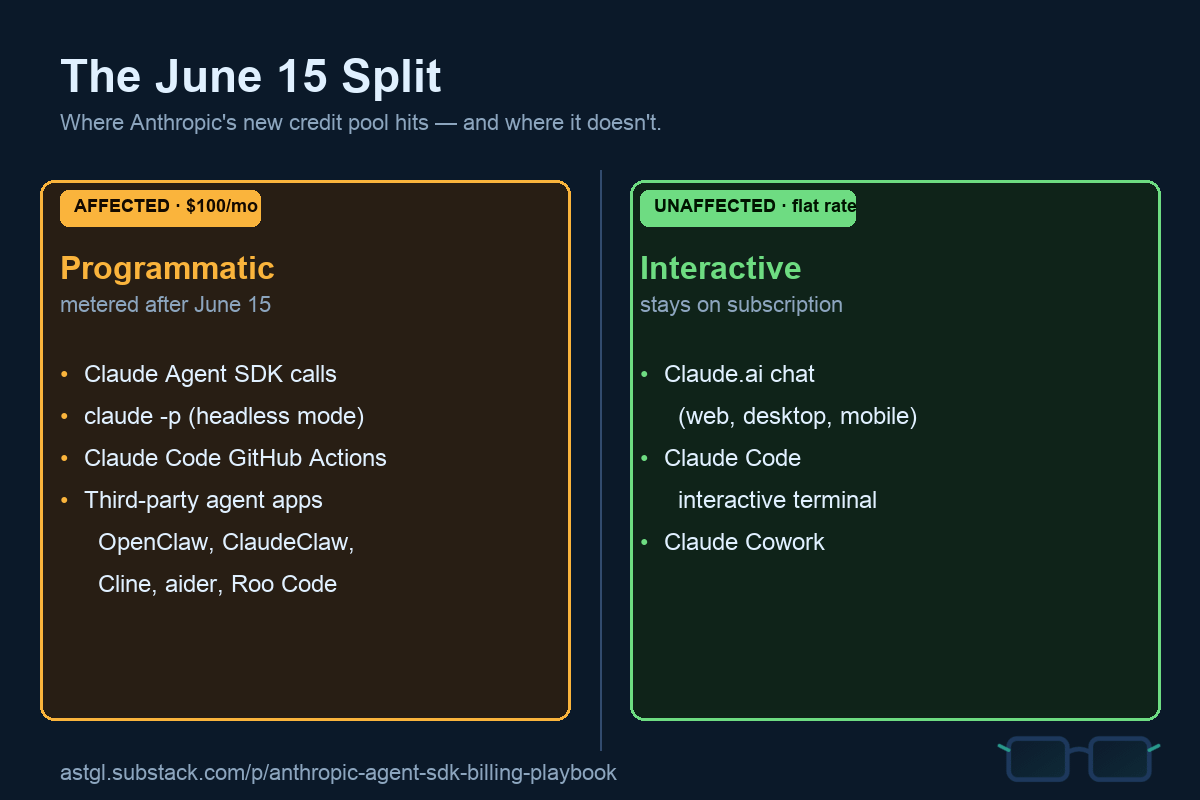

Then May 13 lands, and Anthropic announces the change: on June 15, every programmatic Claude call moves into a metered monthly credit pool. $100 a month on Max 5x. No rollover.

Run the math against your actual schedule. If you've got anything firing every few minutes to every hour (cron pipelines, hourly digests, watchdog sweeps), that pool drains in 7 to 10 days. And here's the kicker: your interactive Claude Code keeps working, but your headless automation just stops. You wake up to a dead pipeline, a drained pool, and a subscription that still says active.
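To make "run the math" concrete, here is a back-of-envelope projection of how long a fixed monthly pool lasts under a given schedule. The two tasks and their per-run costs are illustrative assumptions, not measured Anthropic prices. Plug in your own intervals and the average `totalCostUsd` you actually observe per run.

```typescript
// Back-of-envelope projection: how long a fixed monthly credit pool lasts.
// The per-run costs below are illustrative assumptions, not measured prices.
interface ScheduledTask {
  name: string;
  intervalMinutes: number; // how often the task fires
  avgCostUsd: number; // assumed average cost per run
}

const MINUTES_PER_DAY = 24 * 60;

// Sum of (runs per day x cost per run) across all tasks.
function dailySpendUsd(tasks: ScheduledTask[]): number {
  return tasks.reduce(
    (sum, t) => sum + (MINUTES_PER_DAY / t.intervalMinutes) * t.avgCostUsd,
    0,
  );
}

// Days until the pool is empty at the current daily burn rate.
function daysUntilDrained(poolUsd: number, tasks: ScheduledTask[]): number {
  const perDay = dailySpendUsd(tasks);
  return perDay === 0 ? Infinity : poolUsd / perDay;
}

// Hypothetical fleet: one 15-minute cron and one hourly digest.
const fleet: ScheduledTask[] = [
  { name: 'pipeline-advance', intervalMinutes: 15, avgCostUsd: 0.1 },
  { name: 'hourly-digest', intervalMinutes: 60, avgCostUsd: 0.15 },
];

console.log(daysUntilDrained(100, fleet).toFixed(1)); // 7.6
```

Under these assumed costs the pool burns $13.20/day. Moving only `pipeline-advance` to hourly drops that to $6.00/day, which stretches the same $100 to about 16.7 days. That is why cadence is the first lever to pull.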

What's Actually Going On

This isn't just a random pricing tweak. There is a clear economic driver here. Throughout early 2026, many third-party tools used the Agent SDK at a $20 Pro subscription rate to run workloads that would cost hundreds of dollars at standard API rates. It was essentially compute arbitrage at scale.

Anthropic started cracking down in April, but the May 13 announcement is the structural fix: dedicated monthly credit pools that keep programmatic access available under metered billing. Most agentic operating systems are built directly on the Agent SDK, and because these agents lack a human in the loop to throttle their usage, they are now metered by default. Interactive sessions stay on the flat-rate subscription because the human provides the natural brake. Programmatic agents do not.

The Fix

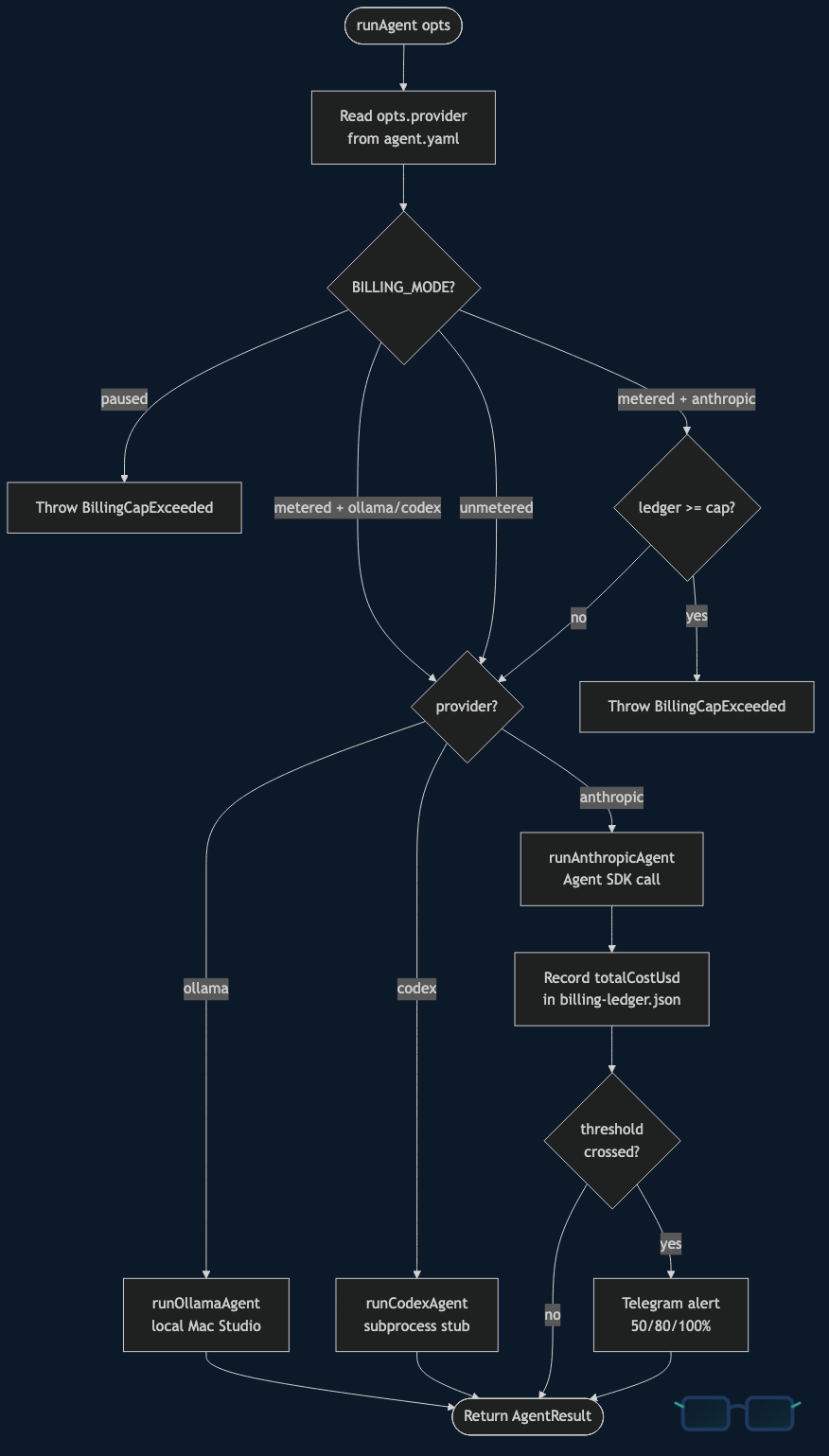

I implemented a two-phase mitigation to deploy before the June 15 deadline.

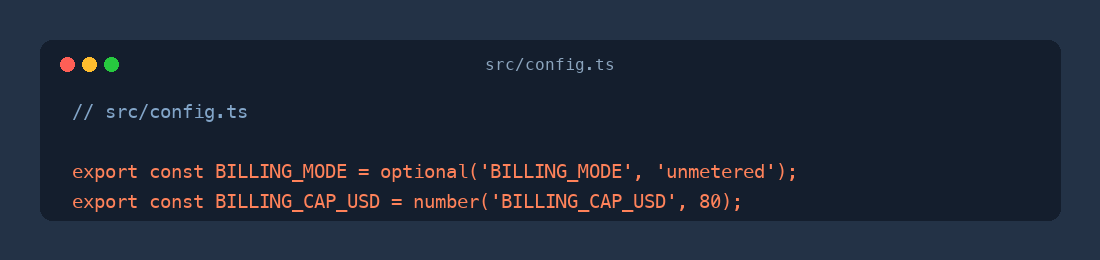

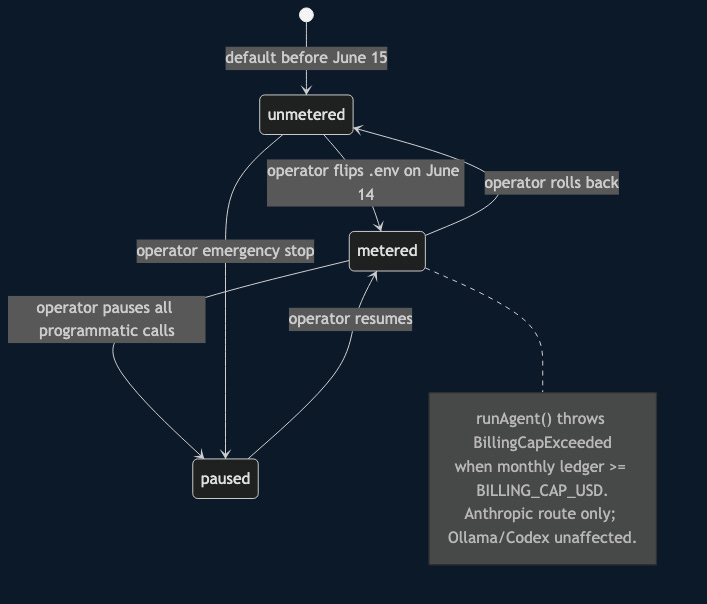

Phase 1 was a hot patch designed to provide immediate protection. I added a BILLING_MODE environment variable with three states: unmetered, metered, and paused. The paused state blocks every programmatic call across all providers, while metered enforces a strict cap on the Anthropic route.
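One detail worth hardening is the tri-state itself. The config line `optional('BILLING_MODE', 'unmetered')` presumably returns whatever string is set. If so, a misspelled value such as `meterd` matches neither `'paused'` nor `'metered'` in the gate and runs uncapped. Below is a minimal sketch of a stricter parser that fails closed. The names `parseBillingMode` and `isBillingMode` are hypothetical, and the fail-closed choice is a suggestion, not part of the original patch.

```typescript
// Hypothetical strict parser for the tri-state BILLING_MODE variable.
type BillingMode = 'unmetered' | 'metered' | 'paused';

const BILLING_MODES: readonly string[] = ['unmetered', 'metered', 'paused'];

function isBillingMode(value: string): value is BillingMode {
  return BILLING_MODES.includes(value);
}

function parseBillingMode(
  raw: string | undefined,
  fallback: BillingMode = 'unmetered',
): BillingMode {
  // Unset or blank: use the default, as an optional() env helper would.
  if (raw === undefined || raw.trim() === '') return fallback;
  const value = raw.trim().toLowerCase();
  if (isBillingMode(value)) return value;
  // Unknown value: fail closed. Otherwise a typo would match neither
  // 'paused' nor 'metered' and the Anthropic route would run uncapped.
  console.error(`Unknown BILLING_MODE "${raw}", treating it as "paused".`);
  return 'paused';
}

// Usage: export const BILLING_MODE = parseBillingMode(process.env.BILLING_MODE);
```

Failing closed means a bad deploy stops the agents, which is loud and cheap. Failing open means a quiet, expensive month.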

I also added a file-backed JSON ledger at store/billing-ledger.json to track monthly costs. It uses a write-then-rename pattern to ensure crash safety during updates. To handle errors, I introduced a BillingCapExceeded error class. Callers detect it with instanceof rather than by matching message text, the same pattern as my KillSwitchRefusal logic, so a typo in a message cannot accidentally trigger a retry loop.
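Here is a minimal sketch of the two pieces this paragraph describes: a JSON ledger updated by write-then-rename, and an error class that retry logic checks by type. The ledger shape (USD keyed by UTC calendar month) and the helper names `recordCost` and `shouldRetry` are assumptions. Unlike the zero-argument `getMonthlyTotal()` in the dispatcher code, this version takes the ledger path as a parameter so it can stand alone.

```typescript
import * as fs from 'node:fs';
import * as path from 'node:path';

// Hypothetical ledger shape: USD spend keyed by UTC calendar month ("2026-06").
type Ledger = Record<string, number>;

function monthKey(d: Date = new Date()): string {
  return d.toISOString().slice(0, 7);
}

function readLedger(file: string): Ledger {
  try {
    return JSON.parse(fs.readFileSync(file, 'utf8')) as Ledger;
  } catch (err) {
    // A missing file just means nothing has been recorded yet.
    if ((err as { code?: string }).code === 'ENOENT') return {};
    throw err;
  }
}

// Write a temp file in the same directory, then rename it over the target.
// The rename swaps files in one step, so a process crash mid-write leaves the
// previous ledger intact instead of a half-written JSON file.
function writeLedgerAtomic(file: string, ledger: Ledger): void {
  const tmp = path.join(path.dirname(file), `.${path.basename(file)}.${process.pid}.tmp`);
  fs.writeFileSync(tmp, JSON.stringify(ledger, null, 2));
  fs.renameSync(tmp, file);
}

// Add one call's cost to the current month and return the new monthly total.
function recordCost(file: string, costUsd: number, now: Date = new Date()): number {
  const ledger = readLedger(file);
  const key = monthKey(now);
  ledger[key] = (ledger[key] ?? 0) + costUsd;
  writeLedgerAtomic(file, ledger);
  return ledger[key];
}

function getMonthlyTotal(file: string, now: Date = new Date()): number {
  return readLedger(file)[monthKey(now)] ?? 0;
}

class BillingCapExceeded extends Error {
  constructor(message: string) {
    super(message);
    this.name = 'BillingCapExceeded';
    // Keeps instanceof working even when compiled to older JS targets.
    Object.setPrototypeOf(this, new.target.prototype);
  }
}

// Decide on the error's class, never its message text: rewording a message
// can't turn a billing refusal into a retry. Other errors return true here
// and fall through to the normal retry policy.
function shouldRetry(err: unknown): boolean {
  return !(err instanceof BillingCapExceeded);
}
```

Keying by month means a new month starts at zero automatically, with no reset job to forget.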

The logic lives in a single chokepoint: runAgent() in src/agent.ts. The pre-call gate checks the cap, and the post-call gate records result.totalCostUsd from the SDK, firing a Telegram alert if a threshold is crossed. As a final safety measure, I cut the cadence on my two highest-frequency tasks: the pipeline-advance cron moved from 15 minutes to hourly, and I paused the council-evening task entirely under metered mode.
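Below is a minimal sketch of the post-call check. It compares the total before and after the call, so each alert threshold fires once per month rather than on every call after it has been crossed. The threshold fractions (50%, 80%, 100% of the cap) are assumptions, and the `notify` callback is a hypothetical stand-in for the Telegram sender, which is not shown.

```typescript
// Which alert thresholds did this call cross? A threshold counts only if the
// total was below it before the call and at or above it after.
function crossedThresholds(
  beforeUsd: number,
  afterUsd: number,
  capUsd: number,
  fractions: number[] = [0.5, 0.8, 1.0], // assumed alert points
): number[] {
  return fractions.filter((f) => beforeUsd < f * capUsd && afterUsd >= f * capUsd);
}

// Stand-in for the Telegram notifier.
type Notify = (message: string) => void;

// Post-call gate: add the SDK-reported cost, alert on any crossing, and
// return the new total so the caller can persist it to the ledger.
function afterCall(
  totalBeforeUsd: number,
  callCostUsd: number,
  capUsd: number,
  notify: Notify,
): number {
  const totalAfterUsd = totalBeforeUsd + callCostUsd;
  for (const f of crossedThresholds(totalBeforeUsd, totalAfterUsd, capUsd)) {
    notify(
      `Anthropic spend crossed ${Math.round(f * 100)}% of the $${capUsd.toFixed(2)} cap: ` +
        `now $${totalAfterUsd.toFixed(2)}`,
    );
  }
  return totalAfterUsd;
}
```

A single expensive call can jump several thresholds at once. The filter then returns all of them, so no alert is skipped.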

```typescript
// src/config.ts — tri-state env that gates programmatic agent calls
export const BILLING_MODE = optional('BILLING_MODE', 'unmetered');
export const BILLING_CAP_USD = number('BILLING_CAP_USD', 80);
```

```typescript
// src/agent.ts — pre-call gate in the dispatcher
function assertBillingAllowed(provider: Provider): void {
  if (BILLING_MODE === 'paused') {
    throw new BillingCapExceeded(
      'BILLING_MODE=paused — programmatic agent calls are disabled.',
    );
  }
  if (provider === 'anthropic' && BILLING_MODE === 'metered') {
    const total = getMonthlyTotal();
    if (total >= BILLING_CAP_USD) {
      throw new BillingCapExceeded(
        `Anthropic monthly credit cap reached: $${total.toFixed(2)} >= $${BILLING_CAP_USD.toFixed(2)}.`,
      );
    }
  }
}

export async function runAgent(opts: AgentOptions): Promise<AgentResult> {
  assertEnabled('AGENTS_ENABLED');
  const provider: Provider = opts.provider ?? 'anthropic';
  assertBillingAllowed(provider);
  if (provider === 'ollama') return runOllamaAgent(opts);
  if (provider === 'codex') return runCodexAgent(opts);
  return runAnthropicAgent(opts);
}
```

Phase 2 focuses on the long-term router infrastructure. I promoted runAgent() from a direct SDK caller to a dispatcher that can route across anthropic, ollama, and codex providers. I also extended the agent.yaml schema with provider: and local_model: fields.
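The new schema fields are only useful if a bad value fails at load time rather than at 3 a.m. Here is a minimal sketch of that validation. It assumes the input is the plain object a YAML parser has already produced (parsing is not shown). The rule that `provider: ollama` requires `local_model` is my assumption, and `validateAgentConfig` and `AgentConfig` are hypothetical names.

```typescript
// Hypothetical load-time validation for the new agent.yaml fields.
const PROVIDERS = ['anthropic', 'ollama', 'codex'] as const;
type Provider = (typeof PROVIDERS)[number];

interface AgentConfig {
  id: string;
  model: string;
  provider: Provider;
  localModel?: string;
}

function isProvider(value: unknown): value is Provider {
  return typeof value === 'string' && (PROVIDERS as readonly string[]).includes(value);
}

function validateAgentConfig(raw: Record<string, unknown>): AgentConfig {
  const { id, model } = raw;
  if (typeof id !== 'string' || typeof model !== 'string') {
    throw new Error('agent.yaml: "id" and "model" must be strings');
  }
  // provider is optional and defaults to anthropic, matching the dispatcher.
  const provider = raw.provider ?? 'anthropic';
  if (!isProvider(provider)) {
    throw new Error(`agent ${id}: unknown provider "${String(provider)}"`);
  }
  const localRaw = raw.local_model;
  let localModel: string | undefined;
  if (typeof localRaw === 'string') localModel = localRaw;
  else if (localRaw !== undefined) throw new Error(`agent ${id}: "local_model" must be a string`);
  // Assumption: routing to ollama without naming a local model is a config error.
  if (provider === 'ollama' && localModel === undefined) {
    throw new Error(`agent ${id}: provider "ollama" requires "local_model"`);
  }
  return { id, model, provider, localModel };
}
```

Rejecting unknown providers outright means a typo such as `olama` stops the agent at load. It never quietly falls back to the metered Anthropic route.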

I shipped a single-turn Ollama runner that wraps the local-LLM client. It returns totalCostUsd: 0 and a model tag like ollama:llama4:scout. I deliberately avoided tool calls in this initial version to keep the scope small.
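The original runner wraps a local-LLM client that isn't shown. The sketch below goes straight to Ollama's REST API instead (`POST /api/chat` on the default port 11434, with `stream: false` so a single JSON object comes back). `runOllamaSingleTurn`, `LocalAgentResult`, and the injectable `post` transport are hypothetical. The transport is a parameter so the runner can be tested without a live Ollama server.

```typescript
// Minimal single-turn Ollama runner sketch (no tool calls, no history).
interface LocalAgentResult {
  text: string;
  totalCostUsd: number;
  model: string;
}

type PostJson = (url: string, body: unknown) => Promise<any>;

// Default transport: the global fetch available in Node 18+.
const fetchPost: PostJson = async (url, body) => {
  const res = await (globalThis as any).fetch(url, {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify(body),
  });
  if (!res.ok) throw new Error(`Ollama returned HTTP ${res.status}`);
  return res.json();
};

async function runOllamaSingleTurn(
  prompt: string,
  localModel: string,
  post: PostJson = fetchPost,
  baseUrl = 'http://localhost:11434',
): Promise<LocalAgentResult> {
  const data = await post(`${baseUrl}/api/chat`, {
    model: localModel,
    messages: [{ role: 'user', content: prompt }],
    stream: false, // one JSON response instead of streamed chunks
  });
  const text = data?.message?.content;
  if (typeof text !== 'string') throw new Error('Unexpected Ollama response shape');
  return {
    text,
    totalCostUsd: 0, // local inference: nothing to charge against the metered pool
    model: `ollama:${localModel}`, // e.g. "ollama:llama4:scout"
  };
}
```

Because `totalCostUsd` is always 0, the same post-call ledger code can run for every provider without a special case.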

```yaml
# agents/<name>/agent.yaml — new fields, validated at load
id: scout
name: SCOUT
model: claude-sonnet-4-6
provider: anthropic # default. flip to 'ollama' to route locally.
# local_model: llama4:scout # used when provider: ollama
```

To be honest, I did not actually flip any agents to Ollama in this specific PR. The agents I need to move, like STEWARD or WATCHMAN, execute Bash and SQLite queries. A local runner without tool-call support would break them silently. Building a proper tool-call shim takes a few more days, but the cadence reduction and the billing breaker alone are enough to keep my spend under $80 per month.
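Until a tool-call shim exists, the silent-breakage risk can be turned into a loud failure at routing time. Below is a minimal sketch of such a guard. The `tools` field on the agent and the capability table are assumptions: Ollama is marked unsupported because its runner is single-turn with no tool calls, and any provider missing from the table is treated as unsupported.

```typescript
// Hypothetical capability guard: refuse to route a tool-using agent to a
// runner that cannot execute tool calls.
interface RoutedAgent {
  id: string;
  provider: string;
  tools: string[]; // hypothetical field, e.g. ['Bash', 'sqlite']
}

// Assumed capability table. Providers not listed count as unsupported
// (fail closed) until someone verifies them.
const SUPPORTS_TOOL_CALLS: Record<string, boolean> = {
  anthropic: true,
  ollama: false,
};

function assertProviderCanRun(agent: RoutedAgent): void {
  const supported = SUPPORTS_TOOL_CALLS[agent.provider] === true;
  if (agent.tools.length > 0 && !supported) {
    throw new Error(
      `${agent.id}: provider "${agent.provider}" cannot run tool calls ` +
        `(${agent.tools.join(', ')}); refusing to route it there.`,
    );
  }
}
```

Calling this from the dispatcher, next to the billing gate, means flipping STEWARD to `provider: ollama` too early throws on the first run. The agent cannot quietly return a text-only answer that never touched Bash or SQLite.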

Why This Matters

Every person using an agent OS is in the same boat. If your tool of choice (ClaudeClaw, Cline, Aider, Roo Code) drives Claude programmatically on your subscription, the June 15 cliff applies to you too. The playbook I used generalizes: you need one chokepoint, one ledger, and one way to audit your cadence.

We also need to be honest about workload requirements. Tasks like editorial review or complex code deliberation still justify the Sonnet price tag. However, simple tasks like classification, routing, or summarization run perfectly fine on a local model with zero metered cost. The router infrastructure makes this migration a simple config flip rather than a massive code refactor.

Finally, this reflects where the industry is heading. OpenAI has used usage-based pricing for a long time, and GitHub Copilot is moving toward credit pools. Over the next year, I expect more vendors to split consumption between interactive flat-rate plans and programmatic metered usage. Building this abstraction now means you won't have to scramble the next time a vendor changes their terms.

Quick Reference

Single Chokepoint: Ensure every agent call flows through one function. This turned a three-week refactor into a one-week job.

Cadence over Architecture: Reducing task frequency (e.g., 15m to 1h) cuts spend faster than migrating to local models.

Ship the Breaker First: Implement the cost ledger and the BillingCapExceeded error as insurance before you attempt the complex provider migration.

```bash
# The cutover, June 14: flip the env, restart, reseed, smoke-test
BILLING_MODE=metered
BILLING_CAP_USD=80
# then
launchctl kickstart -k gui/$(id -u)/com.claudeclaw.app
npm run pipeline -- schedule-advance
npm run schedule -- pause council-evening
```

Found this useful? I share practical lessons from my systems engineering journey at As The Geek Learns