Behind ASTGL: How I Built an Autonomous AI Product Team That Ships Without Me

A technical case study on running a 5-agent AI council that researches, debates, builds, and publishes digital products—entirely on local hardware.

The Problem I Was Trying to Solve

I run a tech blog called As The Geek Learns. I also work full-time as a systems engineer. I also maintain two newsletters. I don’t have time to research product ideas, validate markets, write content, build deliverables, create marketing copy, and publish to a storefront.

But I had a Mac Studio M3 Ultra sitting on my desk with 256 GB of unified memory, and I kept thinking: what if I could build a team that does most of this for me?

Not a chatbot. Not a single prompt chain. An actual team—with specializations, disagreements, voting, and accountability.

This is what I built. Here’s how it works, where it breaks, and what I’ve learned.

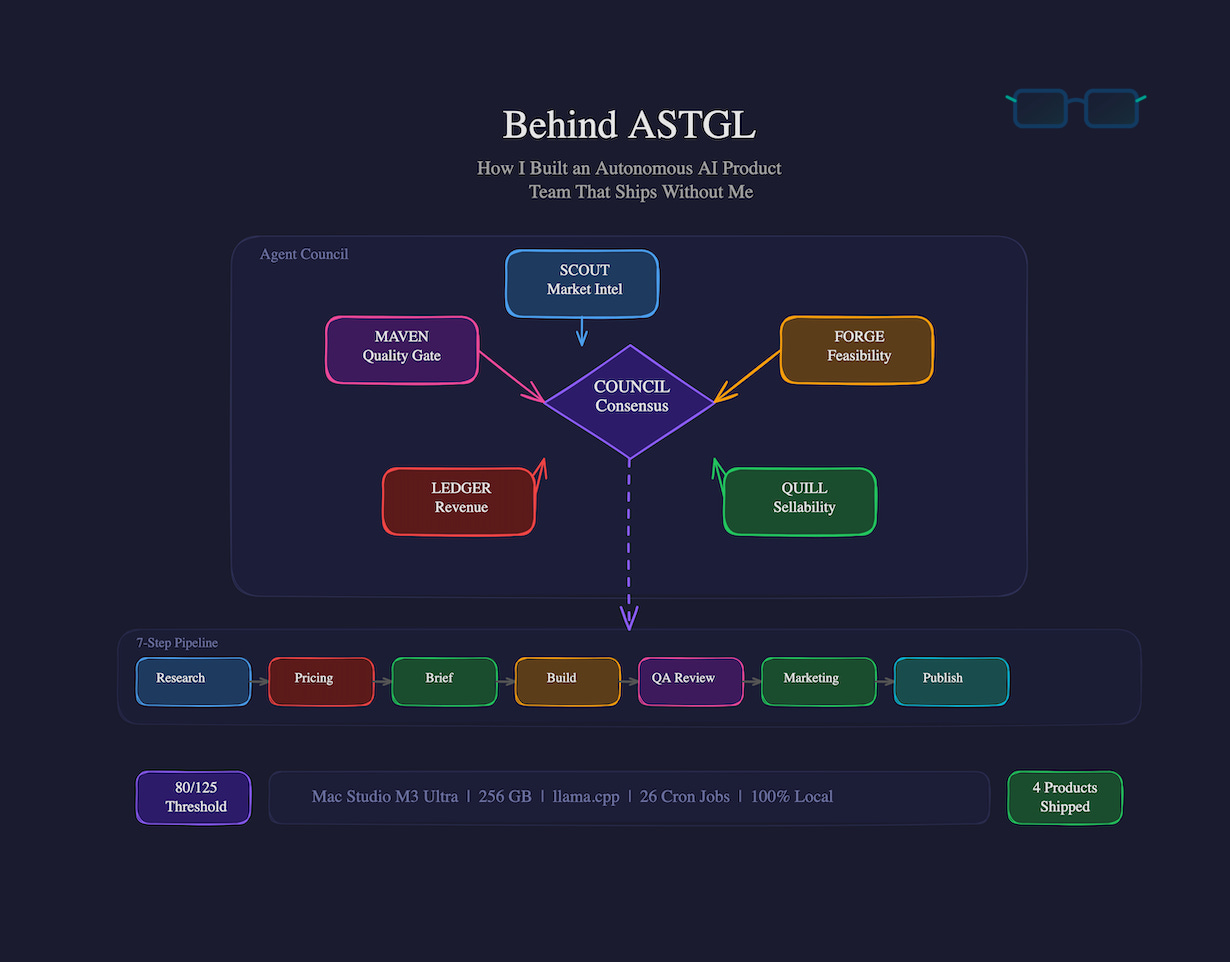

The Architecture: Five Agents, One Pipeline

The system runs on OpenClaw, an open-source AI agent framework. The “team” is a council of five agents, each with a distinct role and scoring rubric. They share a single pipeline—one product at a time, from idea to published storefront listing.

The Agents

SCOUT—Market Intelligence

Scans for demand signals, competitor gaps, and audience fit. Scores ideas on: demand evidence, pain severity, competitor gap, ASTGL brand fit, and freshness. SCOUT’s job is to make sure we’re not building something nobody wants.

FORGE—Feasibility & Build

Estimates build time, assesses toolchain requirements, and defines scope ceilings. Scores on: build time, tool availability, format clarity, scope containment, and reusability. FORGE is the one who says, “That’s a 40-hour build, not a weekend project,” and gets outvoted anyway. (More on that later.)

QUILL—Sellability & Messaging

Tests headlines, evaluates audience clarity, and writes all marketing copy. Scores on: headline test, audience clarity, urgency, differentiation, and shareability. If QUILL can’t write a compelling one-liner, the product doesn’t move forward.

LEDGER—Revenue & Pricing

Analyzes price points, margin viability, market size, and willingness to pay. Scores on: price point, addressable market, willingness to pay, recurring potential, and margin. LEDGER killed our first micro-eBook idea at $1.99—“below the $19 floor individually; bundle pricing required for margin viability.”

MAVEN—Customer Value & Quality

The quality gate. MAVEN scores on pain severity, solution completeness, time to value, trust signals, and predicted satisfaction. Nothing ships without MAVEN’s approval. MAVEN also runs the final quality review, fact-checking deliverables against their own constraints.

How They Score

Each agent scores every product idea on their 5 criteria, 1-5 points each. Maximum: 25 per agent, 125 total. The pipeline threshold is 80% (100/125). Below that, the idea goes back to the backlog.
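The arithmetic above can be sketched in a few lines. This is a minimal illustration, not the council's actual code: the function names, the dictionary layout, and the choice to treat exactly 100/125 as a pass are all assumptions.

```python
from typing import Dict, List

AGENTS = ("SCOUT", "FORGE", "QUILL", "LEDGER", "MAVEN")
CRITERIA_PER_AGENT = 5
MAX_POINTS = 5
THRESHOLD_PERCENT = 80  # pipeline threshold from the article


def council_total(scores: Dict[str, List[int]]) -> int:
    """Sum every agent's criterion scores after checking shape and range."""
    total = 0
    for agent in AGENTS:
        marks = scores[agent]
        if len(marks) != CRITERIA_PER_AGENT:
            raise ValueError(f"{agent} must score exactly {CRITERIA_PER_AGENT} criteria")
        if any(not 1 <= m <= MAX_POINTS for m in marks):
            raise ValueError(f"{agent} scores must be between 1 and {MAX_POINTS}")
        total += sum(marks)
    return total


def passes_threshold(total: int) -> bool:
    """True when the total reaches 80% of the 125-point maximum.

    ASSUMPTION: a total of exactly 100 passes. Integer math avoids
    floating-point surprises at the boundary.
    """
    maximum = len(AGENTS) * CRITERIA_PER_AGENT * MAX_POINTS
    return total * 100 >= THRESHOLD_PERCENT * maximum
```

Under these assumptions, five agents each averaging 4 out of 5 land exactly on the threshold; a single criterion dropping to 3 sends the idea back to the backlog.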

This isn’t a formality. I’ve watched LEDGER tank a product the other four loved because the margin math didn’t work. I’ve watched FORGE dissent on sequencing even when the vote went against them. The scoring rubric creates genuine tension, and that tension produces better decisions.

The Pipeline: Seven Steps

When the council reaches consensus on a product, it enters a sequential pipeline:

1. Market Research—SCOUT deep-dives demand signals, competitor analysis, pricing benchmarks

2. Pricing—LEDGER sets price point with live competitor data

3. Creative Brief—QUILL writes the brief: audience, tone, format, constraints

4. Build—FORGE constructs the deliverables (PDFs, templates, code bundles, worksheets)

5. Quality Review—MAVEN fact-checks, verifies constraints, scores 1-10 (must be ≥7 to pass)

6. Marketing—QUILL produces product descriptions, social posts, email sequences

7. Package & Publish—Final ZIP assembly, Stripe product creation, storefront listing

Each step runs as a cron job on a 30-minute cycle. The pipeline runner checks for the active product, determines which step is next, and executes it. A full product can go from consensus to published in under 24 hours—though in practice it usually takes 2-3 days because of quality gates and my own review bottlenecks.
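A rough sketch of how a runner might pick the next step on each cycle. The step identifiers and function names are hypothetical, and what happens after a failed quality review is left to the caller because the article does not specify it.

```python
from typing import List, Optional

# Step names are illustrative labels for the seven steps listed above.
PIPELINE_STEPS = [
    "market_research",
    "pricing",
    "creative_brief",
    "build",
    "quality_review",
    "marketing",
    "package_publish",
]
QUALITY_PASS_MARK = 7  # MAVEN's review must score at least 7 out of 10


def next_step(completed: List[str]) -> Optional[str]:
    """Return the first step not yet completed, or None when all are done."""
    for step in PIPELINE_STEPS:
        if step not in completed:
            return step
    return None


def record_quality_review(completed: List[str], score: int) -> List[str]:
    """Mark the quality review complete only when the score clears the gate."""
    if not 1 <= score <= 10:
        raise ValueError("quality score must be between 1 and 10")
    if score >= QUALITY_PASS_MARK:
        return completed + ["quality_review"]
    return list(completed)  # gate not passed: the step stays pending
```

Because the runner only ever looks for the first incomplete step, a failed review simply keeps the product parked at step 5 until a later cycle records a passing score.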

The Governance: How Five Agents Make Decisions

This is the part I’m most proud of and the part that surprised me the most.

Voting

The council uses ranked-choice instant-runoff voting. Each agent ranks their preferred ideas. If no idea gets >60% of the weighted score in Round 1, the lowest-scoring idea is eliminated and votes redistribute. This continues until consensus or deadlock.
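The elimination loop can be sketched as follows. Several details here are assumptions, since the article does not spell them out: each ballot carries a numeric weight, ties are broken alphabetically, and a deadlock is declared when only two ideas remain and neither clears 60%.

```python
from typing import List, Optional, Tuple

Ballot = Tuple[List[str], float]  # (ranking from most to least preferred, weight)


def run_irv(ballots: List[Ballot], threshold: float = 0.60) -> Tuple[Optional[str], int]:
    """Return (winner, rounds). Winner is None when the vote deadlocks."""
    active = {idea for ranking, _ in ballots for idea in ranking}
    rounds = 0
    while active:
        rounds += 1
        tally = {idea: 0.0 for idea in active}
        for ranking, weight in ballots:
            # Each ballot counts for its highest-ranked idea still in the race.
            top = next((idea for idea in ranking if idea in active), None)
            if top is not None:
                tally[top] += weight
        counted = sum(tally.values())
        if counted == 0:
            return None, rounds
        leader = max(sorted(tally), key=tally.get)
        if tally[leader] / counted > threshold:
            return leader, rounds
        if len(active) <= 2:
            return None, rounds  # ASSUMPTION: two ideas left, no supermajority
        # Eliminate the lowest scorer; ties break alphabetically (arbitrary choice).
        active.remove(min(sorted(tally), key=tally.get))
    return None, rounds
```

One consequence worth noting under these assumptions: with five equally weighted ballots, a 3-2 split is exactly 60% and does not clear the bar, so a winner needs at least four of the five agents.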

In practice, most decisions resolve in 2-3 rounds. The debates are real—FORGE might argue for a faster build while SCOUT pushes for a higher-signal market. LEDGER might prefer a premium product, while QUILL argues the audience can’t bear that price point.

Deadlock Protocol

If the evening session can’t reach >60% consensus, it escalates to a frontier model (Claude Sonnet) for a tie-breaking analysis. This has happened twice. Both times, the frontier model sided with the minority agent, which I found interesting.

Kill Switches and Stall Prevention

The system has several circuit breakers:

3 consecutive no-consensus meetings → Alert sent to me via Discord

7 days with no active build → Next morning meeting must activate the highest-scored backlog item

48+ hours stalled on one pipeline step → Council votes to hold or shelve

Global kill switch—I can halt everything with a single signal file

The stall prevention matters because I learned early that without it, the council will endlessly debate scoring refinements instead of building products. The 48-hour timer forces decisions.
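The four circuit breakers could be evaluated in one pass per cycle, roughly like this. The state fields, action labels, and function name are hypothetical; only the thresholds and the signal-file idea come from the list above.

```python
from dataclasses import dataclass
from pathlib import Path
from typing import List


@dataclass
class CouncilState:
    consecutive_no_consensus: int      # meetings in a row without consensus
    days_without_active_build: float
    hours_on_current_step: float


def circuit_breaker_actions(state: CouncilState, kill_file: Path) -> List[str]:
    """Return the actions the breakers call for; action names are illustrative."""
    # The global kill switch wins over everything else.
    if kill_file.exists():
        return ["halt_everything"]
    actions = []
    if state.consecutive_no_consensus >= 3:
        actions.append("alert_owner_on_discord")
    if state.days_without_active_build >= 7:
        actions.append("activate_top_backlog_item")
    if state.hours_on_current_step >= 48:
        actions.append("vote_hold_or_shelve")
    return actions
```

Checking the signal file first means a halt never depends on the rest of the state being readable or sane.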

SOUL.md: The Operating Constitution

Every agent operates under a shared SOUL.md—a set of principles that override everything else:

Be genuinely helpful (no filler content)

Have opinions (agents must take positions, not hedge)

Be resourceful before asking (try to solve problems before escalating to me)

Earn trust through competence

When SOUL.md conflicts with a protocol decision, SOUL.md wins. This has saved me from shipping mediocre products more than once—MAVEN has invoked SOUL principles to block a product that technically passed all numeric thresholds but didn’t meet the “genuinely helpful” standard.

The Infrastructure: Running It Locally

Everything runs on a single Mac Studio. No cloud APIs for the pipeline work—just local models.

Models

Primary: Qwen3 32B (served via llama.cpp, not Ollama—4x faster wall time)

Code tasks: Qwen 2.5 Coder 32B

Heavy reasoning: Qwen 2.5 72B (for consolidation and ideation tasks)

Light tasks: Qwen3 8B (monitoring, notifications, briefings)

The 32B model handles all pipeline steps. llama.cpp serves it on port 8081 with flash attention, 3 parallel slots, and 128K total context. I migrated from Ollama after benchmarking the same prompt on both: 3.7 seconds on llama.cpp versus 14.4 seconds on Ollama, roughly a 3.9x speedup.
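For a sense of what those numbers mean in practice, here is a small sketch that works out the context available per slot and the benchmark ratio, and builds a request for the server's OpenAI-compatible chat endpoint. It assumes "128K" means 131,072 tokens, that the total context is split evenly across slots, and that the server is reachable on localhost; the helper names are made up for illustration.

```python
import json
import urllib.request

# Port 8081 comes from the article; localhost is an assumption.
LLAMA_SERVER_URL = "http://localhost:8081/v1/chat/completions"
TOTAL_CONTEXT_TOKENS = 128 * 1024  # ASSUMPTION: "128K" means 131,072 tokens
PARALLEL_SLOTS = 3


def context_per_slot(total: int = TOTAL_CONTEXT_TOKENS, slots: int = PARALLEL_SLOTS) -> int:
    """Tokens per slot, assuming the total context is divided evenly."""
    return total // slots


def speedup(slow_seconds: float, fast_seconds: float) -> float:
    """Ratio between two wall-clock timings of the same prompt."""
    return slow_seconds / fast_seconds


def build_chat_request(prompt: str, max_tokens: int = 512) -> urllib.request.Request:
    """Build (but do not send) a POST request for the chat completions endpoint."""
    payload = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        LLAMA_SERVER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send it. Under the even-split assumption, each of the three slots gets about 43K tokens, which is the figure to keep in mind when sizing pipeline prompts.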

Cron Fleet

26 cron jobs run the system:

Pipeline runner every 30 minutes during business hours

Morning briefing at 6:30 AM

Evening summary at 8:00 PM

Market scans daily

Council meetings (morning standup + evening strategy session)

Notification triage every 5 minutes (critical), hourly (important), every 3 hours (low)

Weekly deep scan, docs audit, test suite, security audits
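Several of the schedules above map directly onto standard five-field cron expressions. The table below is a sketch: the job names are invented, and "business hours" is assumed to mean 9:00 to 17:59 on weekdays, which the article does not confirm.

```python
# Standard cron fields: minute, hour, day of month, month, day of week.
CRON_SCHEDULES = {
    "pipeline_runner": "*/30 9-17 * * 1-5",  # ASSUMPTION: business hours = 9-17, Mon-Fri
    "morning_briefing": "30 6 * * *",        # 6:30 AM daily
    "evening_summary": "0 20 * * *",         # 8:00 PM daily
    "triage_critical": "*/5 * * * *",        # every 5 minutes
    "triage_important": "0 * * * *",         # hourly
    "triage_low": "0 */3 * * *",             # every 3 hours
}

# The same triage cadence expressed as minutes between runs.
TRIAGE_INTERVAL_MINUTES = {"critical": 5, "important": 60, "low": 180}


def triage_due(severity: str, minutes_since_last_run: int) -> bool:
    """True when a triage pass for this severity is due again."""
    return minutes_since_last_run >= TRIAGE_INTERVAL_MINUTES[severity]
```

Keeping the expressions in one table makes it easier to audit the whole fleet at a glance, which matters once the job count climbs into the twenties.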

All delivery goes through Discord with a Slack mirror. The system has its own Discord server with dedicated channels for ops alerts, council meetings, pipeline status, product reviews, and market intelligence.

The Numbers That Matter

Memory usage: ~97 GB of unified memory pinned across 4 models (out of 256 GB available)

Pipeline throughput: Consensus to published in 12-48 hours depending on product complexity

Quality scores: Averaging 8-8.5/10 on MAVEN reviews

Council consensus rate: >80% of votes resolve without escalation

What I’ve Shipped

Since the council went live in late March 2026:

Incident Response Runbook & Playbook Toolkit—112/125, $19-24, targeting DevOps/SRE teams

Sysadmin Documentation Toolkit—113/125, $24, Markdown + Notion + Obsidian formats

Server Security Hardening Checklist Kit—119/125 (highest score), $24, Linux + Windows

Homelab Disaster Recovery Kit—116/125, $29 (premium tier), Proxmox + TrueNAS focused

Each product went through all seven pipeline steps, MAVEN quality review, and full marketing asset generation. The marketing copy, product descriptions, and email sequences were all council-produced.

I review everything before it goes live. The council builds it; I sanity-check it. So far I’ve shipped every product MAVEN approved—their quality bar has been higher than mine in several cases.

Where It Breaks

I’d be dishonest if I didn’t cover the failure modes. There are several.

The Approval Bottleneck

The biggest problem has been me. The system is designed to be autonomous, but exec permissions—the ability for agents to run shell commands—require approval policies. When those policies are too restrictive, the pipeline stalls waiting for me to approve a command I would have approved anyway. One product sat blocked for 30+ hours because the agent couldn’t run a script to rebuild a ZIP file.

The fix was widening exec permissions for the pipeline agent. The tradeoff is real: more autonomy means more trust in the model not to do something destructive. I’m comfortable with it because everything runs locally and I have kill switches, but it’s a genuine tension.
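The tradeoff can be made concrete with a per-agent exec policy. This sketch is not OpenClaw's actual configuration format; the policy table, agent keys, and allowed commands are invented to illustrate a wide-open pipeline agent next to a tightly allowlisted one.

```python
import shlex

# Hypothetical policy table: agent names and command sets are illustrative only.
EXEC_POLICIES = {
    "pipeline": {"mode": "full"},
    "notifier": {"mode": "allowlist", "commands": {"curl", "date"}},
}


def is_command_allowed(agent: str, command_line: str) -> bool:
    """Decide whether an agent may run a command under its exec policy."""
    policy = EXEC_POLICIES.get(agent)
    if policy is None:
        return False  # unknown agents get nothing by default
    if policy["mode"] == "full":
        return True
    tokens = shlex.split(command_line)
    # Only the executable name is checked, so approved commands should be
    # run without a shell to keep metacharacters from widening access.
    return bool(tokens) and tokens[0] in policy["commands"]
```

With a policy like this, a ZIP rebuild from the pipeline agent goes straight through, while the same command from a low-trust agent would still wait for a human.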

Model Limitations

32B-parameter models are not frontier models. They occasionally:

Lose track of multi-step instructions across long pipeline runs

Generate marketing copy that’s technically correct but tonally flat

Miss nuanced quality issues that a larger model would catch

The council structure mitigates this—five agents checking each other catches most issues. But I’ve had to add explicit constraints (like MAVEN’s “interface-agnostic instructions” rule) after catching problems the models didn’t flag.

The Constant Infrastructure Churn

OpenClaw ships updates frequently. Each update can break config schemas, change entry points, invalidate auth tokens, or require new mandatory config keys. I’ve turned off auto-updates and moved to manual, controlled upgrades—the same approach I use for enterprise infrastructure at my day job.

Stale Sessions

When an agent session accumulates enough failed attempts, the model sometimes learns the wrong patterns from its own conversation history. The fix is clearing the session, but diagnosing when this is happening versus a legitimate configuration issue takes time.
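One simple heuristic for deciding when to clear a session is to count the trailing run of failures. This is only a sketch of one possible approach: the threshold of three is a hypothetical default, and it cannot by itself tell a poisoned history apart from a genuine configuration problem.

```python
from typing import List


def should_clear_session(outcomes: List[bool], max_consecutive_failures: int = 3) -> bool:
    """True when the most recent attempts form a long enough failure streak.

    `outcomes` lists attempt results oldest first, with True meaning success.
    ASSUMPTION: the default threshold of 3 is illustrative, not from the article.
    """
    streak = 0
    for succeeded in reversed(outcomes):
        if succeeded:
            break
        streak += 1
    return streak >= max_consecutive_failures
```

If the failures continue after a reset, the cause is more likely configuration than conversation history, which narrows the diagnosis.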

What I’ve Learned

Governance matters more than model quality. The scoring rubric, voting mechanics, and kill switches produce better outcomes than throwing a more powerful model at an unstructured problem. Five constrained agents outperform one unconstrained agent.

Autonomy is a spectrum, not a switch. The right level of autonomy depends on the task, the blast radius, and your comfort level. I give the pipeline agent full exec access. I keep the notification agent on a tight allowlist. Both are correct.

Local inference is viable for production work. A 32B model on good hardware, served properly (llama.cpp, not Ollama, with enough context per slot), handles pipeline tasks reliably. You don’t need cloud APIs for everything.

Your AI team will reflect your engineering discipline. If you don’t build in stall prevention, it won’t prevent stalls. If you don’t define quality gates, quality will drift. If you don’t document your governance, your agents will improvise governance—poorly.

The hardest part isn’t the AI. It’s the ops. Model selection, prompt engineering, and agent design are maybe 30% of the work. The other 70% is cron scheduling, delivery routing, auth management, monitoring, and debugging why the Discord webhook stopped working at 3 AM.

What’s Next

The council is evaluating enterprise-tier products (an AI Agent Readiness Guide targeting IT leaders) and exploring whether the pipeline can handle longer-form content like courses. I’m also working on making the council’s own meeting transcripts and decision logs available as a teaching resource—because the most interesting thing about this system isn’t the products it ships, but the way five AI agents argue about what to build next.

If you want to build something like this, start smaller than I did. One agent, one cron job, one delivery channel. Get that working reliably before you add governance. The infrastructure complexity compounds fast.

James Cruce is a systems engineer and the human behind As The Geek Learns. He runs an autonomous AI product team on a Mac Studio in his home office. The council has not yet voted to replace him, but LEDGER has noted the margin improvement if they did.

Technical Stack: OpenClaw v2026.4.2 · Qwen3 32B (llama.cpp) · Mac Studio M3 Ultra (256 GB) · Discord + Slack delivery · 26 cron jobs · 5-agent council with IRV voting

Published on As The Geek Learns—astgl.com