ChatGPT Just Invented an Entirely Fake Version of My MCP Server

When AI engines don't have you indexed, they don't say "I don't know." They confidently make something up. Here's the receipt, and the weekly test I built to measure how often it happens.

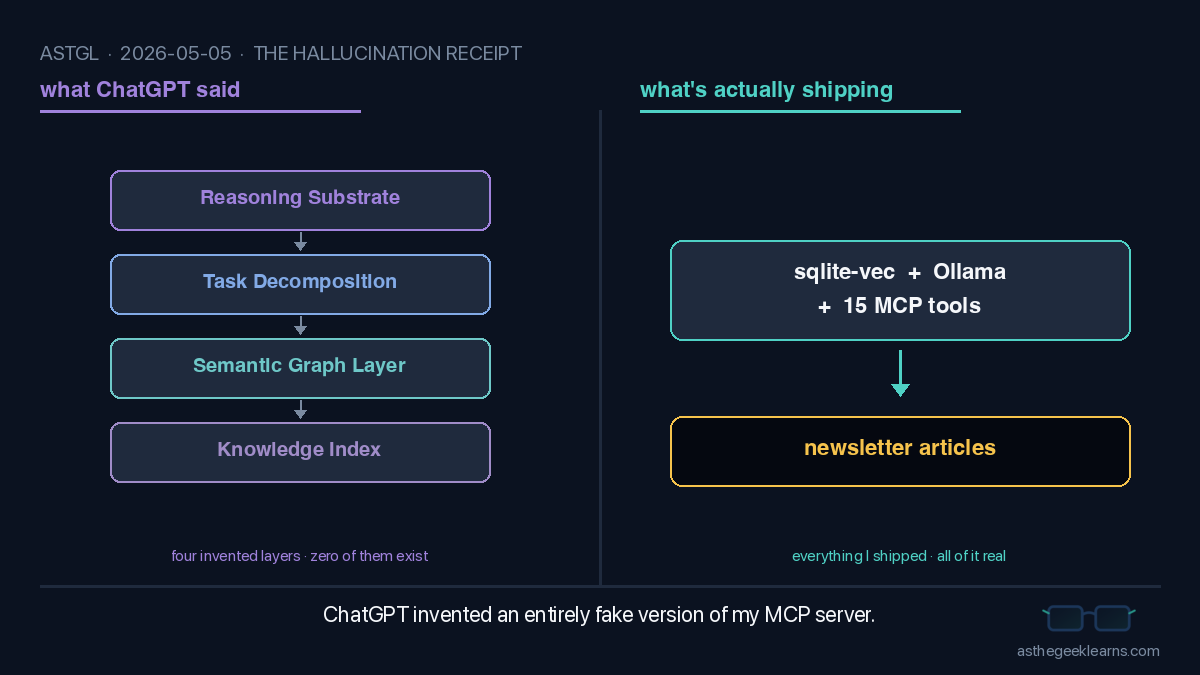

I asked ChatGPT to tell me about my own MCP server. It returned about a thousand words of confident, beautifully formatted, completely fabricated nonsense. Tables. Comparisons. A made-up acronym. A "thinking substrate" that sits above data and below agents. None of it is real, and that's the part worth talking about.

The Setup

My project is called `mcp-astgl-knowledge`. It's an MCP server with 15 tools for searching my newsletter articles, backed by sqlite-vec and Ollama. The whole thing fits on a laptop. ASTGL stands for "As The Geek Learns," which is the name of this newsletter. I wrote it. I shipped it. There is a public GitHub repo and a public package.json.

So when a friend asked me what the MCP server actually does, I figured I'd see how each big AI assistant explained it. ChatGPT was first up. I typed in "ASTGL MCP Knowledge" and hit enter.

What I got back wasn't an answer. It was a hallucination wearing the suit of an answer.

"ASTGL (Abstract Semantic Task Graph Layer) MCP Knowledge Server is an emerging MCP server focused on structured knowledge representation and reasoning... it turns knowledge into graph-based, machine-reasonable structures that agents can query and evolve."

That paragraph alone has three fabrications: the acronym expansion (made up), the "graph-based, machine-reasonable structures" (the server stores text chunks with vector embeddings, no graph), and "evolve" (the index is static between rebuilds; a cron job refreshes it every six hours, and agents do not edit it).

Then it kept going. A four-row "MCP stack" table positioning ASTGL as "the thinking substrate" between data and agents. A comparison matrix against fictional products called "Totem" and "SwarmClaw." A capabilities list including "task decomposition" and "reasoning over structure." Use cases. "Real-world examples." A confident sign-off: "If AST-grep is about seeing code better, then ASTGL is about thinking better."

Every word of it written with the calm, structured, lightly-emoji'd authority that makes ChatGPT sound right by default.

What's Actually Going On

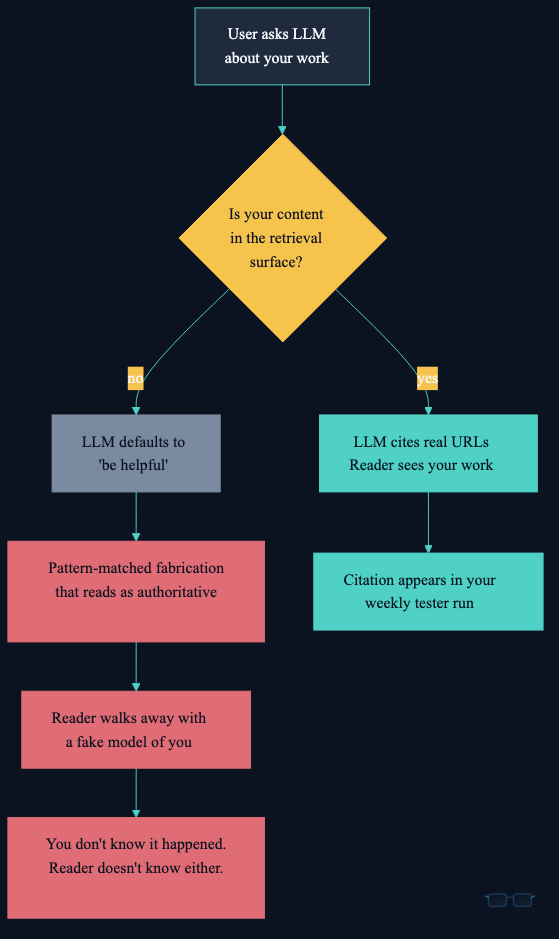

When you ask an LLM about a topic it doesn't have indexed, it has two options: say "I don't know," or fill in the gap with something plausible. In practice, models default to the second one. They're trained to be helpful, and "I don't know" reads as unhelpful. So the gap gets filled.

The result is what I'd call a fluency hallucination. The output has no factual grounding, but the writing is structured well enough that a casual reader can't tell. There are bullet points. There are tables. There's a "👉 In plain terms" callout. The rhetorical scaffolding looks like a real explainer because it's been pattern-matched to one. The contents underneath are pure fiction.

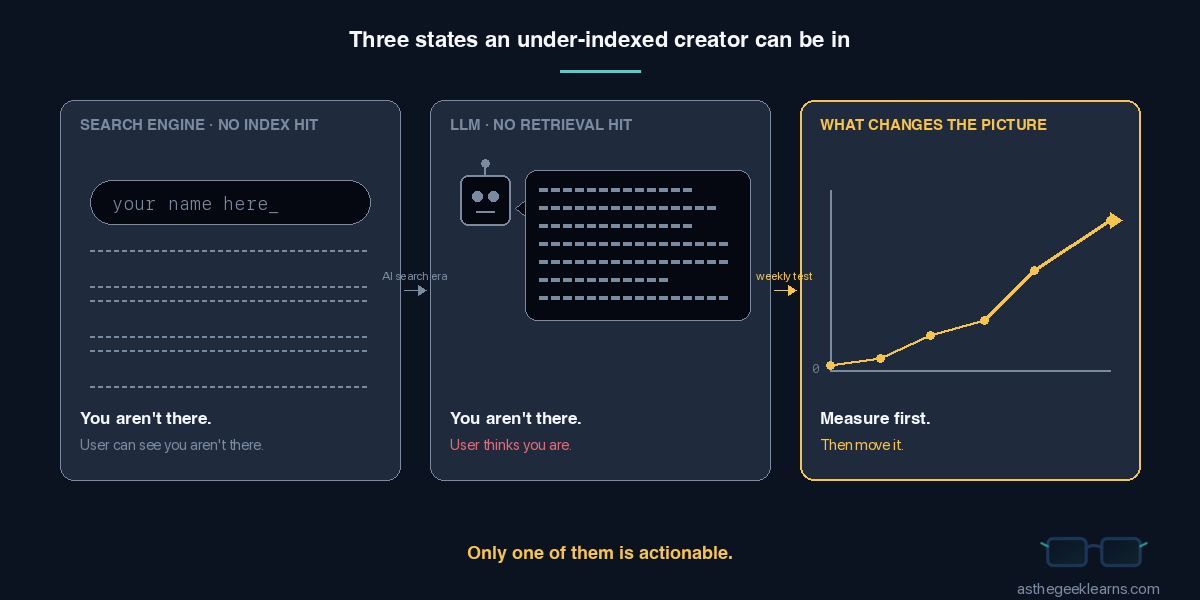

This is a worse failure mode than search engines have. When Google doesn't know about you, you don't appear in results, and the user can see the gap. When an LLM doesn't know about you, the user gets a beautifully written description of someone the LLM made up. Your real work is still missing, and now a fake version is sitting in front of it.

For under-indexed creators (which, right now, is most of us), this is the default. Not the edge case.

The Fix

There's no quick patch for this on the engine side. The model isn't broken. It's doing what it was trained to do. The only handle I have is on my own side: make sure my real content reaches the retrieval surface, and measure whether it's working.

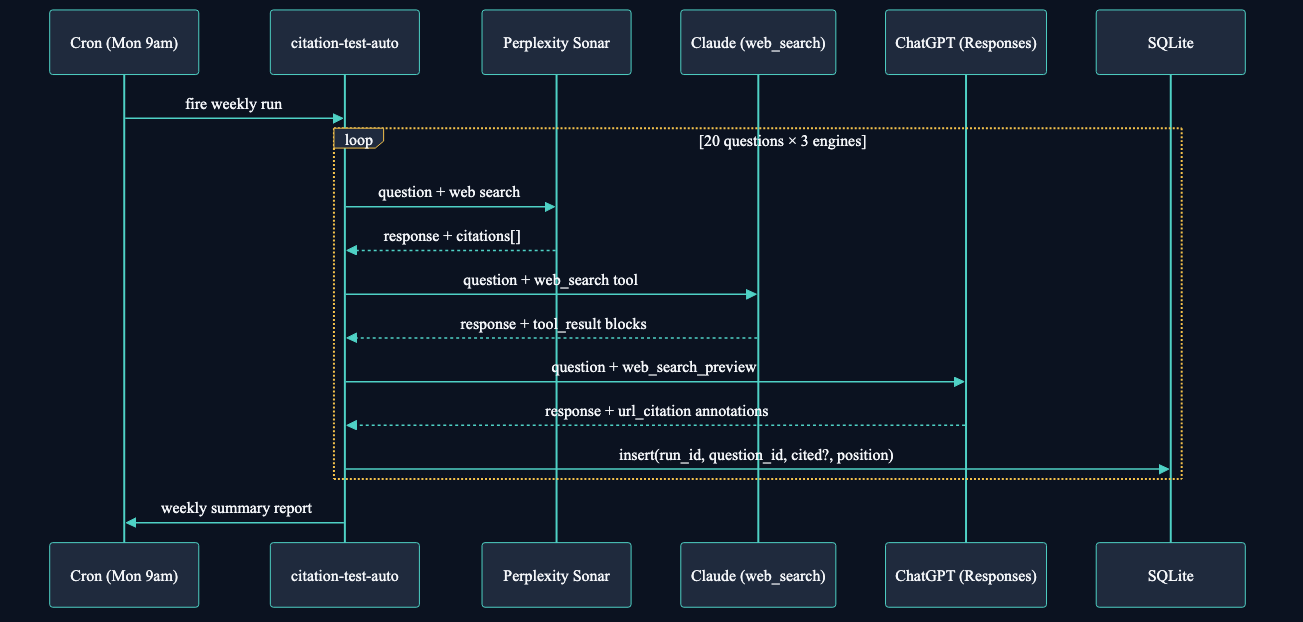

So I built a citation tester. It's a small TypeScript script that hits Perplexity, Claude, and ChatGPT through their APIs, asks each one twenty target questions tied to articles I've already published, and parses the cited URLs from the response. If `astgl.ai` shows up, that's a hit. If it doesn't, that's the data.
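Here is a minimal sketch of what that hit check could look like. It is not the script from the repo: the `CitationCheck` shape, the function names, and the sample URLs are hypothetical, and it assumes each engine's cited URLs have already been collected into an ordered array.

```typescript
// Hypothetical result shape for one engine's answer to one target question.
interface CitationCheck {
  hit: boolean;
  // 1-based position of the first matching citation, or null when there is none.
  position: number | null;
}

// True when `url` is on `domain` or one of its subdomains.
function isOnDomain(url: string, domain: string): boolean {
  try {
    const host = new URL(url).hostname.toLowerCase();
    return host === domain || host.endsWith(`.${domain}`);
  } catch {
    return false; // Not a parseable absolute URL; treat it as a miss.
  }
}

// Finds the first cited URL on the target domain and reports where it ranked.
function checkCitations(citedUrls: string[], domain: string): CitationCheck {
  const index = citedUrls.findIndex((url) => isOnDomain(url, domain));
  return index === -1
    ? { hit: false, position: null }
    : { hit: true, position: index + 1 };
}

// Made-up citation list, for illustration only.
const example = checkCitations(
  ["https://example.com/post", "https://www.astgl.ai/some-article"],
  "astgl.ai",
);
console.log(example); // { hit: true, position: 2 }
```

Matching on the parsed hostname rather than a substring keeps a lookalike such as `notastgl.ai` from counting as a hit.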

The point isn't that the floor, my starting citation count, is bad. I knew it would be. The point is that without a number, "improve our AEO" is a vibe, not a project. Every Monday at 9am the script runs again, writes a fresh row to a SQLite table, and tells me whether the floor moved. When it does move, I'll know which engine moved first, on which questions, and at what citation position. That's the actual feedback loop.
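To make "whether the floor moved" concrete, here is a rough sketch of comparing two weekly runs. The row shape is an assumption (the table schema isn't shown here), the rows are invented sample data rather than real results, and the SQLite read is left out so the example stays self-contained.

```typescript
// Hypothetical row shape; one row per engine, per question, per weekly run.
interface CitationRow {
  runDate: string; // ISO date of the run
  engine: string;
  question: string;
  hit: boolean;
  position: number | null;
}

// Number of hits per engine for a single run date.
function hitsByEngine(rows: CitationRow[], runDate: string): Record<string, number> {
  const counts: Record<string, number> = {};
  for (const row of rows) {
    if (row.runDate !== runDate) continue;
    counts[row.engine] = (counts[row.engine] ?? 0) + (row.hit ? 1 : 0);
  }
  return counts;
}

// Change in hit count per engine between two runs (positive means more citations).
function floorDelta(
  rows: CitationRow[],
  previousRun: string,
  currentRun: string,
): Record<string, number> {
  const before = hitsByEngine(rows, previousRun);
  const after = hitsByEngine(rows, currentRun);
  const delta: Record<string, number> = {};
  for (const engine of Object.keys({ ...before, ...after })) {
    delta[engine] = (after[engine] ?? 0) - (before[engine] ?? 0);
  }
  return delta;
}

// Invented sample rows, for illustration only.
const sampleRows: CitationRow[] = [
  { runDate: "2025-01-06", engine: "perplexity", question: "q1", hit: false, position: null },
  { runDate: "2025-01-06", engine: "chatgpt", question: "q1", hit: false, position: null },
  { runDate: "2025-01-13", engine: "perplexity", question: "q1", hit: true, position: 3 },
  { runDate: "2025-01-13", engine: "chatgpt", question: "q1", hit: false, position: null },
];
console.log(floorDelta(sampleRows, "2025-01-06", "2025-01-13")); // { perplexity: 1, chatgpt: 0 }
```

Keeping one row per engine and question, rather than a weekly total, is what makes it possible to say which engine moved first and on which question.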

Same root cause as the hallucination: my content isn't reaching the retrieval surface. Same fix: get it there. Different observability.

Why This Matters

If you write online and you care whether AI assistants represent you accurately, this is the thing to internalize: the alternative to being cited is not being silent. It's being replaced.

Replaced by a confident summary of work you didn't do, opinions you don't hold, and product features you'd never ship. People who ask an LLM about your work and read its answer don't know they're reading fiction. They walk away with a model of you that you didn't write.

The traditional AEO playbook talks about ranking, authority, and citation rate. All real, all worth measuring. But there's a tier underneath that, and it's the one most independent creators are stuck on right now: existence. Until your content is in the index, ranking doesn't apply. You aren't competing with anyone. You're competing with the LLM's imagination of you.

Measurement is the cheapest part of fixing it, and it's the part most people skip.

Quick Reference

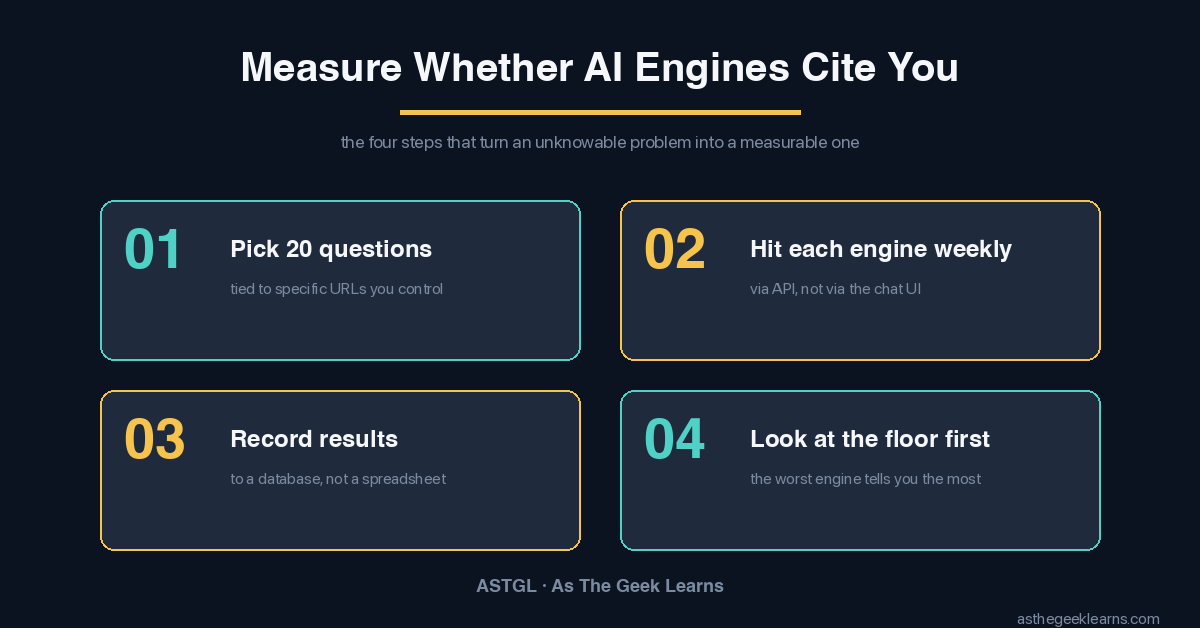

Four things that matter, in order:

1. Pick 20 questions your articles should answer. Tie each one to a specific URL on your site.

2. Hit each engine via API weekly. Perplexity returns a `citations[]` array. Claude returns search results in `web_search_tool_result` blocks. OpenAI returns `url_citation` annotations on `output_text` items.

3. Record the result to a small database, not a spreadsheet. You want trend data, not a snapshot.

4. Look at the floor first. Zero is a fine starting number as long as you're tracking it.
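Step 2 can be sketched as three small extractors, one per response shape. This is an illustration, not the script from the repo: the interfaces model only the fields named above, the nesting is my simplified reading of each provider's payload and should be checked against their current API references, and the function names and sample URL are hypothetical.

```typescript
// Simplified response shapes: only the fields read below are modelled.
interface PerplexityResponse {
  citations?: string[];
}

interface ClaudeResponse {
  content: Array<{ type: string; content?: unknown }>;
}

interface OpenAIResponse {
  output: Array<{
    type: string;
    content?: Array<{
      type: string;
      annotations?: Array<{ type: string; url?: string }>;
    }>;
  }>;
}

// Perplexity: cited URLs arrive as a flat array.
function perplexityUrls(res: PerplexityResponse): string[] {
  return res.citations ?? [];
}

// Claude: search results are nested inside web_search_tool_result blocks.
function claudeUrls(res: ClaudeResponse): string[] {
  const urls: string[] = [];
  for (const block of res.content) {
    // Assumption: a failed search carries a non-array payload, so skip it.
    if (block.type !== "web_search_tool_result" || !Array.isArray(block.content)) continue;
    for (const result of block.content as Array<{ url?: unknown }>) {
      if (typeof result.url === "string") urls.push(result.url);
    }
  }
  return urls;
}

// OpenAI: citations are url_citation annotations on output_text items.
function openaiUrls(res: OpenAIResponse): string[] {
  const urls: string[] = [];
  for (const item of res.output) {
    for (const part of item.content ?? []) {
      if (part.type !== "output_text") continue;
      for (const annotation of part.annotations ?? []) {
        if (annotation.type === "url_citation" && annotation.url) urls.push(annotation.url);
      }
    }
  }
  return urls;
}

// Hand-written sample payload, for illustration only.
const sample: OpenAIResponse = {
  output: [
    { type: "web_search_call" },
    {
      type: "message",
      content: [
        {
          type: "output_text",
          annotations: [{ type: "url_citation", url: "https://astgl.ai/example-article" }],
        },
      ],
    },
  ],
};
console.log(openaiUrls(sample)); // [ 'https://astgl.ai/example-article' ]
```

Normalizing all three engines to a plain `string[]` means the hit check and the database write never need to know which engine a result came from.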

The full script I'm using, including the gotcha where Node's `--env-file` silently dropped my Anthropic key on a fresh keypair, is in the repo. The article about the Anthropic key bug is coming separately.

Found this useful? I share practical lessons from my systems engineering journey at As The Geek Learns.